For a long time, conversational AI was treated as something relatively simple, almost decorative, in the context of a digital product. Adding a chatbot to a website or application felt like a small but visible improvement that signaled innovation without forcing anyone to rethink how the business actually operated underneath.

That approach made sense at the time, because early conversational systems were limited in what they could realistically do, and their role was mostly confined to handling predictable interactions such as answering common questions or guiding users through basic flows, which meant they could exist on the surface of a product without deeply influencing its structure.

What has changed, however, is not just the quality of the models behind conversational AI, but the expectations surrounding them, because users no longer see these systems as optional helpers but increasingly treat them as primary entry points into a product, expecting them to understand intent, navigate complexity, and produce meaningful outcomes rather than just responses. This shift has created a situation where conversational AI is no longer a feature that can be neatly isolated from the rest of the system, but instead becomes a layer that connects multiple parts of it, shaping how users interact with data, how workflows are triggered, and how decisions are ultimately made.

The Subtle Transition from Interface to System Layer

One of the reasons this transformation is often underestimated is that it does not happen abruptly, but rather unfolds gradually as the system becomes more capable and more embedded into everyday usage, which makes it easy to overlook the point at which conversational AI stops being an interface improvement and starts influencing the internal mechanics of the product.

At first, the value appears to be purely experiential, because users can express their intent more naturally, avoid navigating complex menus, and receive faster responses, all of which contribute to a smoother interaction but do not necessarily require fundamental changes to how the product is built. Over time, however, as conversational systems begin to interact with more data sources, trigger actions across multiple services, and handle increasingly complex requests, they start to act less like a layer that sits on top of the system and more like a layer that actively orchestrates it, which introduces a new level of dependency between the AI and the underlying architecture.

This is the point where many businesses begin to encounter unexpected challenges, because the system they initially built to support a limited set of interactions is now being asked to handle dynamic, context-dependent workflows that were never part of the original design assumptions.

Why the Business Case Keeps Strengthening

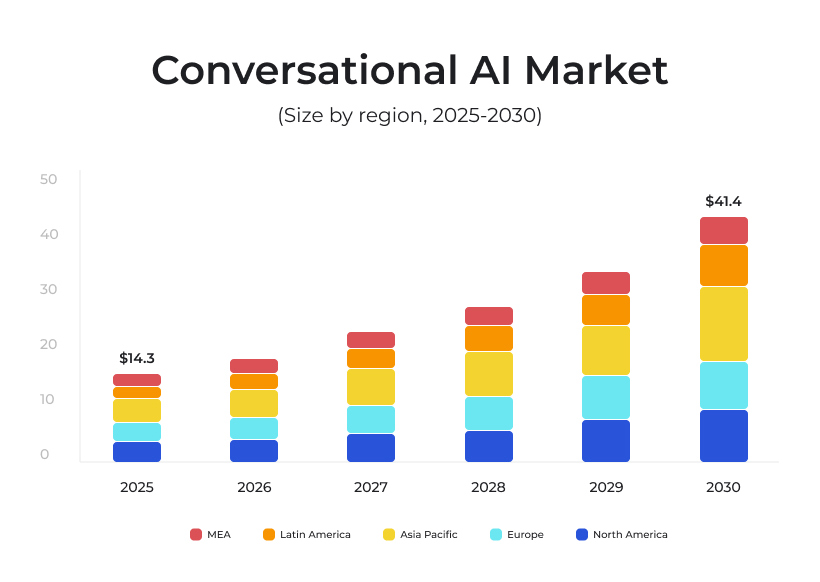

The continued investment in conversational AI is not driven by hype alone, even though the hype is certainly present. It is driven by a very practical set of pressures that modern businesses are facing: the volume and complexity of interactions have grown to a level that traditional approaches struggle to handle efficiently.

Customers expect immediate responses, personalized experiences, and seamless transitions between different stages of their journey, while internal teams are expected to operate faster, coordinate across more systems, and make decisions based on increasingly complex data. Conversational AI addresses these pressures in a way that is difficult to replicate with purely human-driven processes, because it can handle large volumes of interactions simultaneously, maintain consistency across responses, and operate continuously without the constraints that limit human teams.

At the same time, it introduces a different kind of scalability, where increasing demand does not necessarily require a proportional increase in resources, which fundamentally changes how businesses think about growth, cost structures, and operational efficiency.

Where the Real Value Emerges

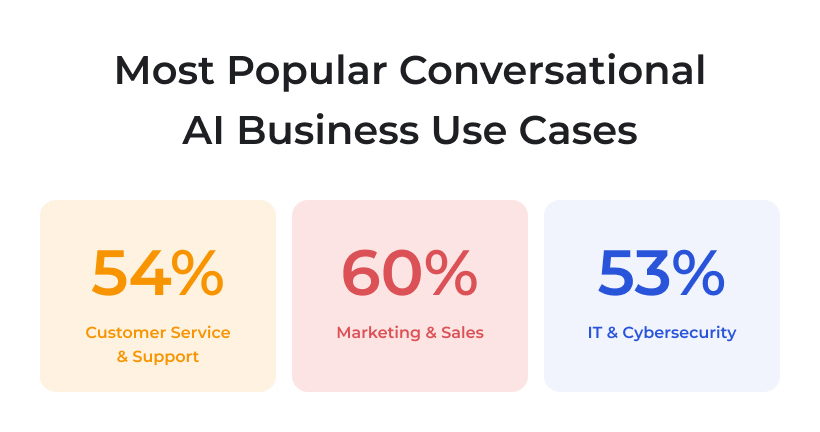

Although conversational AI is often associated with customer support, its most significant impact tends to appear in areas where interaction, data, and decision-making intersect, because those are the points where even small improvements can have a disproportionate effect on overall performance.

In sales processes, for example, conversational systems can reduce friction by answering questions instantly, guiding users toward relevant options, and qualifying leads based on their behavior, which shortens the time between initial interest and meaningful engagement.

In onboarding scenarios, they can help users understand not just how a product works, but how it fits into their specific context, which reduces confusion and increases the likelihood of long-term adoption. Internally, conversational AI can act as a unifying layer that allows teams to access information, trigger workflows, and coordinate actions without constantly switching between different tools, which simplifies operations and reduces cognitive load.

Perhaps most importantly, every interaction generates data that reflects real user intent, which provides insights that are often more actionable than traditional analytics, because they reveal not just what users do, but what they are trying to achieve.

The Problem with Early Success

One of the most deceptive aspects of conversational AI projects is how convincing early success can be, because a system that performs well in a controlled environment can create a strong sense of confidence that does not necessarily translate to real-world conditions. During pilot phases, data is typically cleaner, workflows are simplified, and edge cases are limited, which allows the system to operate under conditions that are far more predictable than those it will encounter at scale. As soon as the system is exposed to a broader range of inputs, including incomplete data, inconsistent formats, and unpredictable user behavior, the assumptions that supported its initial performance begin to break down, often in ways that are not immediately obvious.

What makes this particularly challenging is that the resulting issues are rarely attributed to the correct source, because it is easier to assume that the model needs improvement than to recognize that the surrounding system is not equipped to support the level of variability it is now facing.

The gap between successful pilots and scalable systems is one of the most persistent challenges in AI adoption, and it is often rooted in the tendency to focus on the model rather than the system as a whole. Improving model performance is a logical step, and in many cases it is necessary, but it does not address the underlying issues that emerge when the system grows, such as data inconsistency, integration complexity, and the lack of structured feedback loops.

These issues become more pronounced as the product scales, because the system is required to handle a wider range of scenarios, interact with more components, and maintain consistency across a larger number of interactions. At that point, the limitations are no longer technical in the narrow sense, but systemic, because they arise from how different parts of the product interact with each other rather than from the capabilities of any single component.
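One way to picture the structured feedback loop mentioned above is a small log that records, for each interaction, whether it was resolved and, if not, which part of the system was responsible. The sketch below is only illustrative: the class name `FeedbackLog`, the intent names, and failure labels such as `"data"` or `"integration"` are assumptions introduced for the example, not part of any particular product.

```python
from collections import defaultdict


class FeedbackLog:
    """Records per-interaction outcomes so failures can be traced to a source.

    All names here are hypothetical and chosen for illustration only.
    """

    def __init__(self):
        # intent -> outcome label -> count
        self._counts = defaultdict(lambda: defaultdict(int))

    def record(self, intent: str, resolved: bool, failure_source: str = "") -> None:
        # Resolved interactions share one label; failures are bucketed by source.
        key = "resolved" if resolved else (failure_source or "unknown")
        self._counts[intent][key] += 1

    def resolution_rate(self, intent: str) -> float:
        outcomes = self._counts.get(intent)
        if not outcomes:
            return 0.0
        total = sum(outcomes.values())
        return outcomes.get("resolved", 0) / total

    def failure_breakdown(self, intent: str) -> dict:
        # Shows whether failures cluster around the model, the data, or integrations.
        outcomes = self._counts.get(intent, {})
        return {k: v for k, v in outcomes.items() if k != "resolved"}
```

A breakdown like this makes it harder to default to "the model needs improvement" when most failures for an intent are in fact labeled as data or integration problems.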

As conversational AI systems grow, data becomes both more valuable and more difficult to manage, because it evolves from a relatively static input into a dynamic and constantly changing layer that influences how the entire system behaves. In early stages, data is often curated and structured in a way that supports the intended use case, but as real users begin to interact with the system, variability increases, and the data becomes more reflective of real-world conditions, which are inherently less predictable. This introduces challenges related to consistency, quality, and relevance, because the system must now process inputs that do not conform to the patterns it was originally trained on, which can lead to fluctuations in performance and confidence.

Addressing these challenges requires a shift in how data is treated, from something that is prepared for the model to something that is actively managed as part of the system, with mechanisms for validation, normalization, and continuous monitoring.
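As a rough illustration of what validation, normalization, and monitoring can look like at the point where user input enters the system, here is a minimal sketch. The message shape (a dict with `text` and `user_id`), the `max_len` limit, and the rejection reasons are all assumptions made for the example; a real system would define its own.

```python
from dataclasses import dataclass, field
import unicodedata


@dataclass
class IntakeStats:
    """Running counters for monitoring (hypothetical names)."""
    accepted: int = 0
    rejected: int = 0
    reasons: dict = field(default_factory=dict)

    def reject(self, reason: str) -> None:
        self.rejected += 1
        self.reasons[reason] = self.reasons.get(reason, 0) + 1


def normalize(text: str) -> str:
    """Unicode-normalize the input, collapse whitespace runs, and trim."""
    text = unicodedata.normalize("NFKC", text)
    return " ".join(text.split())


def intake(raw: dict, stats: IntakeStats, max_len: int = 2000):
    """Validate and normalize one incoming message; return None if rejected."""
    text = raw.get("text")
    if not isinstance(text, str):
        stats.reject("missing_text")
        return None
    text = normalize(text)
    if not text:
        stats.reject("empty")
        return None
    if len(text) > max_len:
        stats.reject("too_long")
        return None
    stats.accepted += 1
    return {"user_id": raw.get("user_id"), "text": text}
```

Tracking rejection reasons over time is the monitoring part: a sudden rise in one reason is a signal that real-world input has drifted away from what the system was prepared for.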

Human Expectations and System Behavior

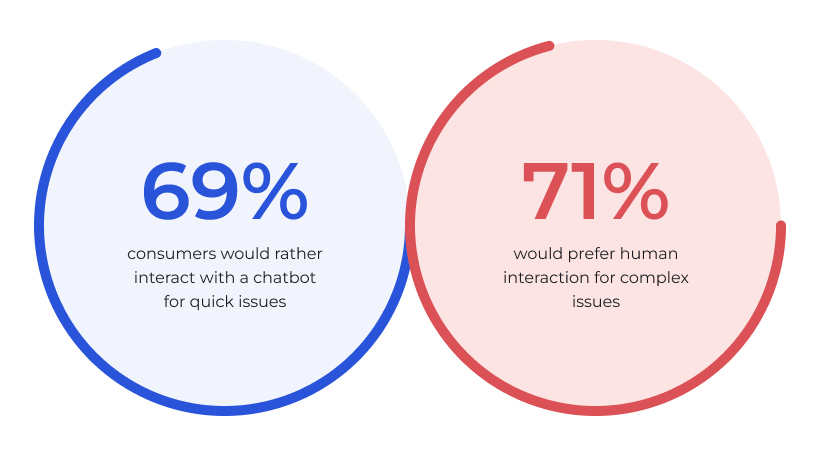

The success of conversational AI is closely tied to how users perceive and interact with it, which means that technical performance alone is not sufficient to ensure adoption. Users expect these systems to be responsive, accurate, and helpful, but they also expect them to be understandable, predictable, and aligned with their needs, which creates a set of requirements that extends beyond traditional measures of performance.

Balancing these expectations is not straightforward, because increasing flexibility can reduce predictability, while increasing control can limit usefulness, and finding the right balance requires careful design and continuous adjustment. Trust plays a central role in this process, because users are more likely to rely on a system that behaves consistently and transparently, even if it is not perfect, than on one that appears more capable but less reliable.

As conversational AI becomes more integrated into products, its role expands from facilitating interactions to coordinating actions, which represents a significant shift in how it influences the system. Instead of simply responding to user input, it begins to trigger workflows, connect data across systems, and support decision-making processes, effectively acting as a bridge between different parts of the product. This transformation increases the potential value of conversational AI, but it also increases the complexity of implementing it effectively, because the system must now handle not just communication, but coordination. At this stage, the quality of the surrounding architecture becomes critical, because it determines how smoothly the system can operate and how well it can adapt to changing conditions.
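The move from communication to coordination can be sketched as a small dispatcher that maps a recognized intent to a workflow and falls back gracefully when something goes wrong. This is a simplified sketch under stated assumptions: the `Orchestrator` class, the `check_order` intent, and the handler functions are hypothetical, and intent recognition itself is assumed to happen elsewhere.

```python
from typing import Callable, Dict

Handler = Callable[[dict], str]


class Orchestrator:
    """Routes a recognized intent to a registered workflow handler."""

    def __init__(self, fallback: Handler):
        self._handlers: Dict[str, Handler] = {}
        self._fallback = fallback

    def register(self, intent: str, handler: Handler) -> None:
        self._handlers[intent] = handler

    def dispatch(self, intent: str, params: dict) -> str:
        # Unknown intents go straight to the fallback handler.
        handler = self._handlers.get(intent, self._fallback)
        try:
            return handler(params)
        except Exception:
            # A failing workflow should degrade to the fallback, not end the conversation.
            return self._fallback(params)


# Hypothetical handlers standing in for real downstream services.
def check_order(params: dict) -> str:
    return f"Order {params['order_id']} is being looked up."


def handoff(params: dict) -> str:
    return "Routing you to a human agent."


orchestrator = Orchestrator(fallback=handoff)
orchestrator.register("check_order", check_order)
```

Even in this toy form, most of the code concerns routing and failure handling rather than language, which is why the surrounding architecture matters as much as the model at this stage.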

What Founders Should Pay Attention To

For founders and decision-makers, the key challenge is not whether to adopt conversational AI, but how to position it within the product in a way that allows it to deliver meaningful value. This requires a shift in perspective, from thinking about conversational AI as a feature to understanding it as a component that interacts with multiple layers of the system. It involves considering how data flows through the product, how workflows are structured, how decisions are made, and how responsibility is distributed, because all of these factors influence how the system behaves at scale.

It also requires a willingness to invest in areas that may not be immediately visible, such as data infrastructure, integration design, and feedback mechanisms, because these are the elements that support long-term success.

Conversational AI is often described in terms of what it does, such as answering questions or assisting users, but its real significance lies in how it changes the way systems operate. By introducing a layer that connects interaction, data, and decision-making, it creates new possibilities for efficiency, scalability, and user experience, but it also introduces new challenges that must be addressed thoughtfully.

The businesses that succeed with conversational AI are not necessarily those that adopt it first, but those that understand how it fits into the broader system and design for that integration from the beginning. In the end, the value of conversational AI is determined not by how well it can talk, but by how effectively it can support the complex processes that define modern digital products.